Mar 17 2026

AI Deployment

From Sandbox to Surgery: A Step-by-Step Guide to Deploying Medical AI in 2026

Artificial Intelligence in surgery is moving from a fascinating concept in medical journals to a tangible presence in operating rooms. We are transitioning rapidly from the “sandbox” phase of development, characterized by exploration and proof-of-concept work, to the “surgery” phase, where AI models directly affect patient care.

However, the path from a functioning algorithm to a clinically deployed surgical aid is incredibly complex. For innovators developing these tools, understanding the entire ecosystem is paramount, particularly the foundational stages that happen long before the AI sees its first real patient. In 2026, the differentiator isn’t just a clever algorithm; it’s a model built on rock-solid data and integrated into a fully compliant workflow.

This blog offers a strategic, step-by-step roadmap outlining the deployment of a medical AI model that aids in surgical planning and guidance. It highlights the indispensable role of highly accurate annotation, segmentation, and quality control, and explores the platforms and regulatory requirements that will define the healthcare landscape in 2026.

Phase 1: Clinical Question and Data Foundation

A successful AI deployment begins not with an algorithm, but with a precise clinical question. What specific surgical challenge will this AI address? Will it plan complex resections pre-operatively, segment critical vessels from live fluoroscopy intra-operatively, or predict post-operative outcomes from pre-operative scans? This question dictates every subsequent step, especially data acquisition.

In 2026, data quality overshadows data quantity. The most powerful models are trained not on massive, noisy datasets, but on highly curated, representative, and meticulously annotated “gold-standard” data. For surgical AI, this data must come from diverse sources (CT, MRI, 3D ultrasound, intra-operative video) and span a range of demographics and pathologies, so that the model generalizes and does not encode bias.
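One way to make the representativeness requirement concrete is to tally each metadata category and flag any category that falls below a chosen share of the dataset. The sketch below is a minimal illustration and is not a bias audit. The case records, their field names (`modality`, `sex`), and the 20% floor used by `representation_report` are all hypothetical.

```python
from collections import Counter

def representation_report(cases, field, min_share=0.2):
    """Return each category's share of the dataset and the categories below min_share."""
    counts = Counter(case[field] for case in cases)
    total = sum(counts.values())
    shares = {category: n / total for category, n in counts.items()}
    # Categories under the (assumed) floor are flagged for targeted data acquisition.
    flagged = sorted(category for category, share in shares.items() if share < min_share)
    return shares, flagged

# Hypothetical case metadata: 10 cases described only by modality and sex.
cases = (
    [{"modality": "CT", "sex": "F"}] * 3
    + [{"modality": "CT", "sex": "M"}] * 3
    + [{"modality": "MRI", "sex": "F"}] * 2
    + [{"modality": "MRI", "sex": "M"}]
    + [{"modality": "US", "sex": "M"}]
)

for field in ("modality", "sex"):
    shares, flagged = representation_report(cases, field)
    print(field, shares, "under-represented:", flagged)
```

A real review would also examine combinations of attributes, such as modality by pathology, but the same counting idea applies.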

This is the point where the foundation for customized 3D models is laid. An AI can only accurately model what it has been taught to see; if the training data lacks specificity, the final surgical aid will be unreliable.

Phase 2: The Critical Link: Accurate Annotation and Segmentation

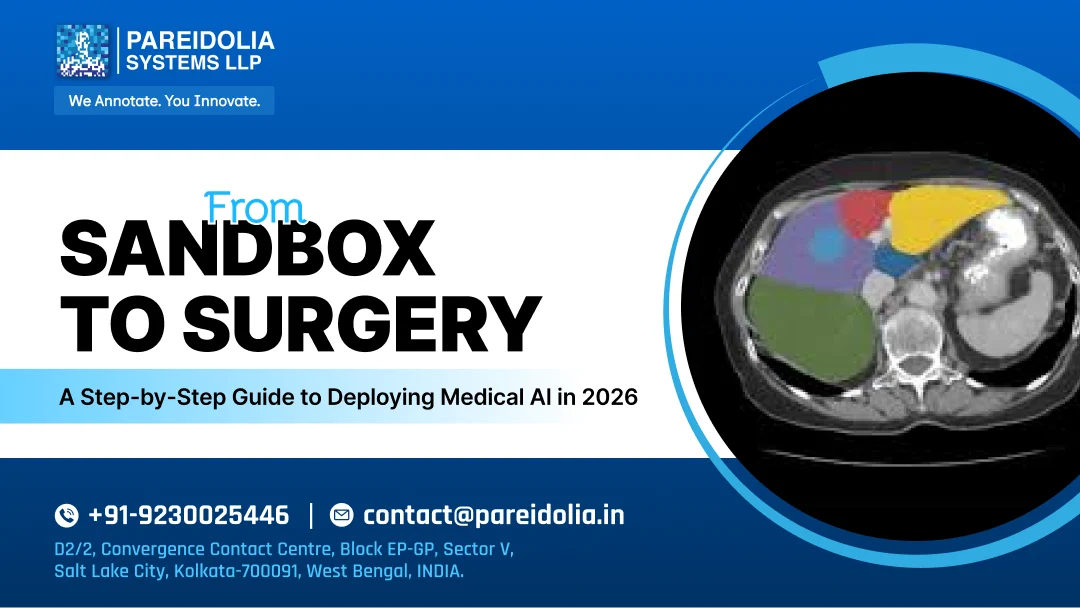

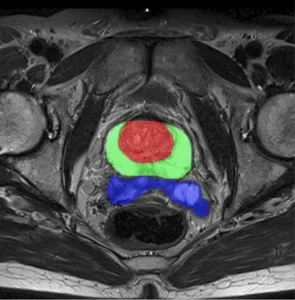

The crux of converting raw DICOM images into a surgically useful AI is medical image segmentation. This is where raw pixels are transformed into anatomical reality.
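The sketch below is a minimal illustration of the first step. It converts stored CT pixel values to Hounsfield units using the DICOM rescale attributes (HU = stored value × RescaleSlope + RescaleIntercept) and then applies a naive intensity threshold. The slope, the intercept, the sample pixel values, and the 300 HU bone-like threshold are illustrative assumptions. A threshold like this is a crude baseline of the kind that expert segmentation exists to improve on, and it is not a clinical method.

```python
def to_hounsfield(stored, slope=1.0, intercept=-1024.0):
    """Map stored CT pixel values to Hounsfield units: HU = stored * slope + intercept."""
    return [[value * slope + intercept for value in row] for row in stored]

def threshold_mask(hu, lower, upper):
    """Label a pixel 1 if its HU value falls inside [lower, upper], else 0."""
    return [[1 if lower <= value <= upper else 0 for value in row] for row in hu]

# Hypothetical 2x4 slice of stored values. In practice, slope and intercept come from
# the RescaleSlope (0028,1053) and RescaleIntercept (0028,1052) attributes.
stored = [[0, 1000, 1024, 1100],
          [1500, 2000, 1040, 24]]
hu = to_hounsfield(stored)
# Illustrative bone-like window; an assumption, not a validated clinical threshold.
bone_mask = threshold_mask(hu, 300, 3000)
print(hu)
print(bone_mask)
```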

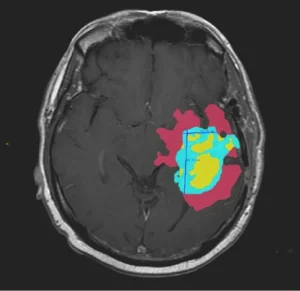

For surgical planning AI, accurate segmentation is not a luxury; it is the fundamental prerequisite. If an AI is tasked with delineating a tumor for resection, a 2 mm error in boundary definition could lead to incomplete resection (endangering the patient) or excessive tissue loss (resulting in functional deficit).
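Overlap scores alone can hide a localized boundary error of the size described above, so boundary-distance metrics are often reported alongside them. The sketch below computes the symmetric Hausdorff distance in millimetres. This distance is the largest gap between any point on one contour and the nearest point on the other. The contours and pixel spacings are hypothetical, and the brute-force search is only suitable for small point sets.

```python
import math

def directed_hausdorff_mm(a, b, spacing):
    """Largest distance (mm) from any point in contour a to its nearest point in contour b."""
    sx, sy = spacing
    return max(
        min(math.hypot((ax - bx) * sx, (ay - by) * sy) for bx, by in b)
        for ax, ay in a
    )

def hausdorff_mm(a, b, spacing=(1.0, 1.0)):
    """Symmetric Hausdorff distance: the worst boundary disagreement in either direction."""
    return max(directed_hausdorff_mm(a, b, spacing), directed_hausdorff_mm(b, a, spacing))

# Hypothetical contours as (column, row) pixel coordinates; the predicted contour
# overshoots the reference by 2 pixels at one corner.
reference = [(0, 0), (0, 10), (10, 10), (10, 0)]
predicted = [(0, 0), (0, 10), (10, 10), (12, 0)]

print(hausdorff_mm(reference, predicted, spacing=(1.0, 1.0)))
print(hausdorff_mm(reference, predicted, spacing=(0.5, 0.5)))
```

For real contours with thousands of points, SciPy's `scipy.spatial.distance.directed_hausdorff` is a faster option. Many evaluations also report the 95th-percentile Hausdorff distance, which reduces sensitivity to single outlier points.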

The Necessity of Expert Segmentation:

Automatic segmentation algorithms have improved; however, the gold standard, especially for complex pathologies and irregular anatomies, remains human-in-the-loop validation driven by medical experts. This is crucial for two main reasons:

- Context and Edge Cases: An expert radiologist or anatomist understands the nuances of anatomical variations, post-surgical changes, and subtle pathological features that an automated system might misinterpret.

- Accuracy Required for 3D Modeling: High-resolution segmentation is the raw material for building patient-specific 3D models. When 2D segmentations are converted to 3D, small boundary errors amplify, causing irregular surfaces, artifacts, and misaligned anatomy. This, in turn, makes 3D-printed guides or intra-operative visualizations useless or, worse, misleading.
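A toy volume can illustrate the amplification effect. The sketch below assumes a spherical structure and 1 mm isotropic voxels. It counts the voxels inside a sphere, then recounts after the whole surface is pushed outward by one voxel, which stands in for a systematic 1 mm over-segmentation. `sphere_voxel_count` and `relative_volume_error` are hypothetical helpers and are not part of any imaging library.

```python
def sphere_voxel_count(radius):
    """Count voxels whose centres lie within `radius` voxels of the origin."""
    r2 = radius * radius
    span = range(-radius, radius + 1)
    return sum(1 for x in span for y in span for z in span if x * x + y * y + z * z <= r2)

def relative_volume_error(radius, boundary_error=1):
    """Relative volume change when the whole surface is pushed outward by boundary_error voxels."""
    true_volume = sphere_voxel_count(radius)
    segmented_volume = sphere_voxel_count(radius + boundary_error)
    return (segmented_volume - true_volume) / true_volume

# Assumes 1 mm isotropic voxels, so a 1-voxel shift is a 1 mm boundary error.
for radius_mm in (10, 25):
    error = relative_volume_error(radius_mm)
    print(f"radius {radius_mm} mm: {error:.1%} volume error from a 1 mm boundary shift")
```

For a sphere of radius r and a uniform boundary shift δ, the relative volume error is (1 + δ/r)^3 - 1, which is roughly 3δ/r for small δ. That gives about 33% at r = 10 mm and about 12.5% at r = 25 mm, and the voxel counts approximate these values. The same 1 mm contouring error therefore matters far more for small structures.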

This phase is where “sandbox” experimentation meets the rigors of clinical necessity. The AI is trained to understand complex anatomy with high precision, building the “map” that will guide the surgeon.

Phase 3: Platform Selection and Model Development

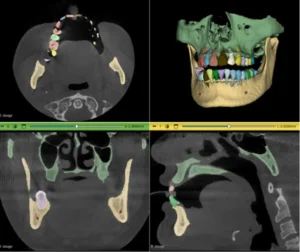

In 2026, the medical AI landscape is defined by platforms that offer security, scale, and compliance. Companies aren’t building their systems from scratch; they are leveraging existing ecosystems.

The Role of Integrated Data Platforms:

A centralized Data Labeling and MLOps platform is essential for managing the entire AI lifecycle. These platforms are where medical annotation teams operate, and they must provide:

- DICOM Compatibility: Native support for high-resolution medical imaging data.

- Specialized Tools: 3D bounding boxes, polygon segmentation, point-cloud labeling, and volumetric segmentation tools (e.g., specialized brushes and interpolation algorithms) designed for anatomical complexity.

- Workflow Orchestration: Seamless transfer of data from clinical sources (e.g., a hospital PACS) to the annotation team, through quality control, and into the model training pipeline.

- Integration with Regulatory Frameworks: These platforms must facilitate the necessary documentation (data lineage, annotation protocols, QC reports) required for FDA and EU MDR submissions.
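The workflow-orchestration and documentation requirements above can be sketched as a small state machine that logs every stage transition. This is a minimal sketch under stated assumptions. The stage names, the `Case` class, and the audit-record fields are hypothetical and do not reflect any particular platform's data model.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical stage graph; real labeling/MLOps platforms define their own states.
ALLOWED_TRANSITIONS = {
    "ingested": {"annotation"},               # pulled from the hospital PACS
    "annotation": {"qc_review"},
    "qc_review": {"approved", "annotation"},  # QC can send a case back for rework
    "approved": set(),                        # released to the training pipeline
}

@dataclass
class Case:
    case_id: str
    stage: str = "ingested"
    audit_log: list = field(default_factory=list)

    def advance(self, new_stage, actor, note=""):
        """Move the case to new_stage and record who moved it, when, and why."""
        if new_stage not in ALLOWED_TRANSITIONS[self.stage]:
            raise ValueError(f"{self.case_id}: {self.stage} -> {new_stage} is not allowed")
        # Hypothetical audit-record fields.
        self.audit_log.append({
            "case_id": self.case_id,
            "from": self.stage,
            "to": new_stage,
            "actor": actor,
            "note": note,
            "timestamp": datetime.now(timezone.utc).isoformat(),
        })
        self.stage = new_stage

case = Case("CASE-0001")
case.advance("annotation", actor="pacs_bridge")
case.advance("qc_review", actor="radiologist_01", note="liver protocol v1.2")
case.advance("approved", actor="kol_reviewer_01")
for entry in case.audit_log:
    print(entry)
```

Rejecting undeclared transitions, such as jumping from ingestion straight to approval, is what makes the log usable as data lineage. Every approved case can then be traced back through the annotator and reviewer who handled it.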

Key Platforms in Use

Popular open-source platforms streamline annotation and 3D modeling workflows.

The AI model is then trained, cross-validated, and refined within this controlled data environment. The output is a robust algorithm that can segment anatomy from new, unseen data with a high level of confidence.
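During cross-validation, it matters to split by patient rather than by scan or slice. Otherwise, images from the same patient can appear in both the training and validation folds and inflate the measured performance. The sketch below assumes each scan record carries hypothetical `patient_id` and `scan_id` fields. `patient_level_folds` is an illustrative helper and not a library function.

```python
import random
from collections import defaultdict

def patient_level_folds(scans, k=5, seed=0):
    """Split scans into k folds so that all scans of one patient share a fold."""
    by_patient = defaultdict(list)
    for scan in scans:
        by_patient[scan["patient_id"]].append(scan["scan_id"])
    patients = sorted(by_patient)
    random.Random(seed).shuffle(patients)  # seeded for a reproducible, documentable split
    folds = [[] for _ in range(k)]
    for i, patient_id in enumerate(patients):
        folds[i % k].extend(by_patient[patient_id])
    return folds

# Hypothetical dataset: 6 patients, each with a pre-op CT and a follow-up CT.
scans = [{"patient_id": f"P{p}", "scan_id": f"P{p}-CT{s}"} for p in range(6) for s in (1, 2)]
folds = patient_level_folds(scans, k=3)
for i, validation in enumerate(folds):
    training = [scan_id for j, fold in enumerate(folds) if j != i for scan_id in fold]
    print(f"fold {i}: train on {len(training)} scans, validate on {validation}")
```

Seeding the shuffle keeps the split reproducible, which helps when the split itself has to be documented. If scikit-learn is already in use, its `GroupKFold` provides the same patient-grouped splitting.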

Phase 4: Regulatory Compliance as the Strategic Enabler

By 2026, the regulatory pathway for medical AI (especially Software as a Medical Device, or SaMD) has become well-defined but remains extremely rigorous.

Regulatory compliance is not a hurdle to clear at the end; it is a strategic requirement that must be embedded from the very beginning.

The Regulatory Pillars for Surgical AI:

- Data Provenance and Bias Mitigation: Regulatory bodies (like the FDA or EU Competent Authorities) will scrutinize how the training data was collected, how it reflects patient diversity, and what measures were taken to prevent algorithm bias. This requires a clear data acquisition strategy and a transparent, audit-ready annotation process.

- Annotation Protocol and Personnel: There must be documented, standardized protocols for how every annotation was performed. In 2026, an audit trail of “who labeled what and how” is as important as the model itself.

- Robust Quality Control (QC): A multi-stage QC process must be in place.

This includes:

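- Inter-Annotator Agreement (IAA): Several experts annotate the same cases independently, and the agreement between their segmentations is measured. Consistent annotation requires standard operating procedures (SOPs) that standardize the annotation and segmentation workflow.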

- Final Review by Key Opinion Leaders (KOLs): Senior clinical experts validate the training sets to ensure the “gold standard” is truly gold.

- Model Accuracy Metrics: The model’s performance on the validation set is rigorously measured using specialized metrics like Dice Similarity Coefficient (DSC) for segmentation accuracy.
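Both the inter-annotator agreement check and the model metric can be expressed with the Dice Similarity Coefficient, DSC = 2|A ∩ B| / (|A| + |B|). The sketch below makes several choices that are assumptions for illustration:

- Masks are represented as sets of voxel coordinates.
- IAA is summarised as the mean pairwise Dice across annotators. Cohen's kappa is another common choice.
- The model is scored against a majority-vote consensus. Majority voting is a simple stand-in for whatever consensus procedure an SOP actually specifies.

The masks themselves are hypothetical.

```python
from itertools import combinations

def dice(a, b):
    """Dice Similarity Coefficient between two masks given as sets of voxel coordinates."""
    if not a and not b:
        return 1.0  # convention: two empty masks agree perfectly
    return 2 * len(a & b) / (len(a) + len(b))

def mean_pairwise_dice(masks):
    """Inter-annotator agreement summarised as the mean Dice over every annotator pair."""
    pairs = list(combinations(masks, 2))
    return sum(dice(a, b) for a, b in pairs) / len(pairs)

def majority_vote(masks):
    """Consensus mask: voxels labelled by more than half of the annotators."""
    votes = {}
    for mask in masks:
        for voxel in mask:
            votes[voxel] = votes.get(voxel, 0) + 1
    return {voxel for voxel, n in votes.items() if n > len(masks) / 2}

# Hypothetical 2D masks from three annotators and one model prediction.
annotator_a = {(0, 0), (0, 1), (1, 0), (1, 1)}
annotator_b = {(0, 0), (0, 1), (1, 0)}
annotator_c = {(0, 0), (0, 1), (1, 0), (1, 1), (2, 1)}
model_output = {(0, 0), (0, 1), (1, 1), (2, 2)}

annotations = [annotator_a, annotator_b, annotator_c]
consensus = majority_vote(annotations)
print(f"IAA (mean pairwise Dice): {mean_pairwise_dice(annotations):.3f}")
print(f"Model DSC vs consensus: {dice(model_output, consensus):.3f}")
```

With these toy masks, pairwise agreement averages about 0.832, and the model scores 0.750 against the consensus.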

A non-compliant development process makes deployment impossible, regardless of the model’s technical merit. Compliance is the bridge that turns a promising algorithm into a marketable, safe medical product.

Phase 5: Transition to the Operating Room (The Deployment)

The deployment phase itself involves moving the validated model into the clinical environment. This is not simply about running code; it’s about clinical integration.

The Anatomy of a Surgical AI Deployment:

- Cloud-to-Edge Infrastructure: While training happens in the cloud, clinical inference often happens on “the edge”, on high-performance servers located within the hospital or integrated directly into surgical hardware (e.g., a visualization system or surgical robot). This minimizes latency, which is critical for real-time intra-operative guidance.

- Integration with Hospital Systems: The AI system must seamlessly integrate with the hospital’s RIS/PACS (Radiology Information System / Picture Archiving and Communication System) to ingest new scans and store its results. This integration relies on standards like DICOM and HL7/FHIR.

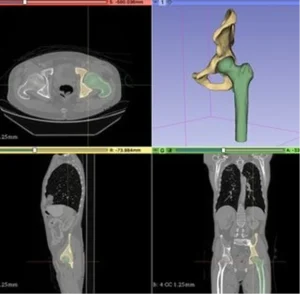

- The Generation of Surgical Aids (where 3D modeling thrives): Once the AI segments a patient’s pre-operative scan, its high-fidelity output is used to automatically generate customized 3D models.

These models can be:

- 3D Printed: For tactile surgical planning, patient education, or creating patient-specific implants.

- Integrated into Augmented Reality (AR): For projecting anatomical overlays directly onto the patient or the surgeon’s view (e.g., overlaying a virtual model of a tumor onto the live laparoscopic feed).

- Used for Robotic Path Planning: Guiding a robotic surgical arm to follow a precise, pre-defined path based on the 3D map.

The accuracy of the initial segmentation (from Phase 2) directly dictates the accuracy and safety of these critical downstream applications.

- Clinical Validation and Post-Market Surveillance: Final deployment includes rigorous clinical trials proving real-world safety and efficacy before implementation in healthcare settings.

Post-market surveillance monitors performance on new patients, feeding insights into the data-annotation-training cycle for continuous improvement through MLOps.
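The surveillance loop described above can be sketched as a rolling check on audited cases. The sketch assumes that clinicians periodically review model output and that each reviewed case receives a Dice score against the expert correction. Low-scoring cases are then queued for re-annotation. The window size, the 0.85 alert threshold, and the `PerformanceMonitor` class are hypothetical choices for illustration, not regulatory values.

```python
from collections import deque

class PerformanceMonitor:
    """Hypothetical rolling check of per-case Dice scores from post-deployment expert audits."""

    def __init__(self, window=5, alert_below=0.85):
        self.scores = deque(maxlen=window)
        self.alert_below = alert_below
        self.review_queue = []  # cases to send back for expert re-annotation

    def record(self, case_id, dice_score):
        """Add one audited case; return the rolling mean and whether to raise an alert."""
        self.scores.append(dice_score)
        if dice_score < self.alert_below:
            self.review_queue.append(case_id)
        rolling_mean = sum(self.scores) / len(self.scores)
        # Alert only on a full window so a single early case cannot trigger it.
        alert = len(self.scores) == self.scores.maxlen and rolling_mean < self.alert_below
        return rolling_mean, alert

monitor = PerformanceMonitor(window=5, alert_below=0.85)
audited = [0.92, 0.90, 0.91, 0.80, 0.78, 0.76, 0.79]  # hypothetical audit results
for i, score in enumerate(audited, start=1):
    rolling_mean, alert = monitor.record(f"case-{i}", score)
    print(f"case-{i}: rolling Dice {rolling_mean:.3f}, alert={alert}")
print("queued for re-annotation:", monitor.review_queue)
```

Alerting only on a full window trades a little delay for fewer false alarms. The queued case IDs are what would feed back into the annotation and retraining cycle.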

How We Empower Your Deployment Journey

We accelerate your medical AI projects with expert image annotation, segmentation, and quality control services. We provide the essential, high-quality “fuel” that powers the entire deployment ecosystem.

Our Core Value Proposition:

- Unmatched Medical Expertise: Our annotation teams are not crowdsourced; they are credentialed medical professionals (radiologists, anatomists, and specialized technicians) who bring clinical nuance and anatomical precision to every segmentation task. They don’t just segment structures; they understand the anatomy and pathology being visualized.

- Commitment to Accuracy (for 3D Modeling): We are specialists in achieving the extreme accuracy required for creating patient-specific 3D models and guides. We understand how segmentations are converted into volumes, and we apply rigorous quality control protocols to ensure the data is accurate and fully 3D-ready.

- Audit-Ready Compliance and Platform Integration: We operate with a transparency and documentation standard built for FDA and EU MDR compliance. Our data provenance reports, annotation protocols, and QC metrics provide the audit trail that regulatory bodies demand. We integrate seamlessly with your chosen Data Labeling and MLOps platforms, ensuring a smooth, secure data flow.

- Specialized Tooling Mastery: Our teams are proficient in the advanced volumetric, 3D, and interpolation tools found in leading medical imaging platforms, ensuring that complex structures (e.g., vessel trees, nerve pathways, multi-part tumors) are segmented with high fidelity across entire 3D datasets.

Conclusion: From Blueprint to Bedside

The deployment of medical AI in surgery is poised to transform healthcare in 2026. However, the path is structured and demands uncompromising quality. Success comes from a collaborative ecosystem built on a strong and reliable data foundation, not from a single “black box” algorithm.

Expert-driven medical image segmentation and quality control ensure accuracy, build trust, and maintain compliance, enabling a safe transition into real clinical environments.

We partner with you to deliver AI that is precise, reliable, compliant, and ready for real-world clinical deployment.

1. Q: What defines a “successful” surgical AI deployment in 2026?

Ans: Success is measured by clinical utility and seamless integration. A successful deployment delivers real-time, actionable insights, such as identifying critical structures or predicting surgical margins, with over 98% segmentation accuracy.

Beyond the algorithm itself, it requires integration with Hospital Information Systems, ISO compliance, and measurable reductions in surgical variability or operative time.

2. Q: How does segmentation accuracy affect 3D print tolerances for surgical guides?

Ans: Inaccurate segmentation creates “geometric noise.” A 1 mm deviation in 2D contouring can result in a 3–5% volumetric error in 3D models. For orthopedic or cranial guides, this leads to poor mechanical fit, potentially causing intra-operative misalignment or hardware failure.

3. Q: Why is “Human-in-the-Loop” (HITL) mandatory for surgical AI training?

Ans: Fully automated models often fail at “edge cases” like post-traumatic anatomy or rare pathologies. HITL ensures clinical experts validate every boundary, delivering accurate “ground truth” for high-risk operating room environments.

4. Q: How does Pareidolia Systems support AI deployment?

Ans: Pareidolia Systems LLP enables healthcare AI deployment through infrastructure integration, scalable strategies, and responsible AI implementation.